Algorithms to Antenna: Train Deep-Learning Networks with Synthesized Radar and Comms Signals

Modulation identification is an important function for an intelligent receiver. There are numerous applications in cognitive radar, software-defined radio (SDR), and efficient spectrum management. To identify communications and radar waveforms, it’s necessary to classify their modulation type. DARPA’s Spectrum Collaboration Challenge highlights the need to manage the demand for a shared RF spectrum. Here, we show how you can exploit learning techniques in these types of applications to effectively identify modulation schemes.

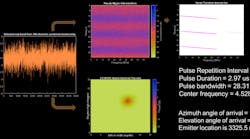

Figure 1 shows an electronic-support-measures (ESM) receiver and an RF emitter in a simple scenario where modulation identification applies.

1. Shown is an electronic support measures (ESM) and RF emitter scenario.

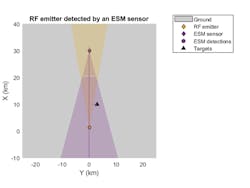

The signal processing required to pull signal parameters out of the noise is well established. For example, you can model a system that performs signal parameter estimation in a radar warning receiver; Figure 2 shows the processed output of such a system. Typically, a phased array receives a wideband signal, and signal processing then determines the parameters that are critical to identifying the source, including direction and location.

2. It’s possible to model a system that performs signal parameter estimation in a radar warning receiver.
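As a rough illustration of pulling parameters out of the noise, the sketch below estimates pulse width and pulse repetition interval (PRI) from a sampled magnitude envelope by simple thresholding. The helper `estimate_pulse_params`, the threshold, and the synthetic test signal are hypothetical; a real radar warning receiver uses far more robust detection and also estimates direction and location, which this sketch does not attempt.

```python
import random
import statistics


def estimate_pulse_params(envelope, fs, threshold):
    """Estimate mean pulse width and mean PRI (both in seconds) from a sampled
    magnitude envelope by thresholding. Hypothetical helper for illustration."""
    on = [v > threshold for v in envelope]
    rises = [i for i in range(1, len(on)) if on[i] and not on[i - 1]]
    falls = [i for i in range(1, len(on)) if on[i - 1] and not on[i]]
    widths = []
    for r in rises:
        # Pair each rising edge with the first falling edge that follows it.
        f = next((f for f in falls if f > r), None)
        if f is not None:
            widths.append((f - r) / fs)
    # PRI = spacing between consecutive rising edges.
    pris = [(b - a) / fs for a, b in zip(rises, rises[1:])]
    pw = statistics.fmean(widths) if widths else float("nan")
    pri = statistics.fmean(pris) if pris else float("nan")
    return pw, pri


# Synthetic test: 10-sample pulses every 100 samples at fs = 1 MHz, light noise.
random.seed(0)
fs = 1e6
envelope = []
for n in range(520):
    in_pulse = n >= 20 and (n - 20) % 100 < 10
    envelope.append(abs((1.0 if in_pulse else 0.0) + random.gauss(0, 0.05)))

pw, pri = estimate_pulse_params(envelope, fs, threshold=0.5)
print(f"pulse width ~ {pw * 1e6:.1f} us, PRI ~ {pri * 1e6:.1f} us")
# prints: pulse width ~ 10.0 us, PRI ~ 100.0 us
```

Thresholding works here only because the noise is far below the threshold; at low SNR, false edges appear, which is one reason learned classifiers are attractive.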

As an alternative approach, deep-learning techniques can be applied to improve classification performance.

Applying Deep-Learning Techniques

Modulation identification is challenging because of the range of waveforms that exist in any given frequency band. In addition to the crowded spectrum, the environment can be harsh in terms of propagation conditions and non-cooperative interference sources. Adding to the challenge are questions such as:

- How will these signals present themselves to the receiver?

- How should unexpected signals, which haven’t been received before, be handled?

- How do the signals interact/interfere with each other?

Machine- and deep-learning techniques can be applied to help with the challenge. You can make tradeoffs between the time required to manually extract features to train a machine-learning algorithm and the large data sets required to train a deep-learning network.

Manually extracting features takes time and requires detailed knowledge of the signals. Deep-learning networks, on the other hand, need large amounts of training data to achieve the best results. One benefit of a deep-learning network is that it requires less preprocessing and less manual feature extraction.

The good news is you can generate and label synthetic, channel-impaired waveforms. These generated waveforms provide training data that can be used with a range of deep-learning networks (Fig. 3).

3. Modulation identification workflow with deep learning: When using a deep-learning network, less preprocessing work and less manual feature extraction are required.

Of course, data can also be collected from live systems, but it may be challenging to collect and label. Keeping track of waveforms and synchronizing transmit and receive systems often produces large data sets that are difficult to manage. Coordinating data sources that are not geographically co-located is also a challenge, as are tests that span a wide range of conditions. In addition, labeling this data as it’s collected (or after the fact) requires a lot of work because ground truth may not always be obvious.

Synthesizing Radar and Communications Waveforms

Let’s look at a specific example with the following mix of communications and radar waveforms:

Radar

- Rectangular

- Linear frequency modulation (LFM)

- Barker code

Communications

- Gaussian frequency-shift keying (GFSK)

- Continuous-phase frequency-shift keying (CPFSK)

- Broadcast frequency modulation (B-FM)

- Double-sideband amplitude modulation (DSB-AM)

- Single-sideband amplitude modulation (SSB-AM)

We programmatically generate thousands of I/Q signals for each modulation type. Each signal has unique parameters and is augmented with various impairments to increase the fidelity of the model. For each radar waveform, the pulse width and pulse repetition frequency are randomly generated. For LFM waveforms, the sweep bandwidth and direction are also randomized, and for Barker waveforms, the chip width and number of chips are randomized.
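A minimal Python/NumPy sketch of this kind of randomized radar waveform synthesis follows. The function name `radar_pulse_train`, the 100 MHz sample rate, and every parameter range are assumptions chosen for illustration, not values from the original workflow; only the Barker sequences themselves are standard.

```python
import numpy as np

# Standard Barker sequences, keyed by length.
BARKER = {
    2: [1, -1],
    3: [1, 1, -1],
    4: [1, 1, -1, 1],
    5: [1, 1, 1, -1, 1],
    7: [1, 1, 1, -1, -1, 1, -1],
    11: [1, 1, 1, -1, -1, -1, 1, -1, -1, 1, -1],
    13: [1, 1, 1, 1, 1, -1, -1, 1, 1, -1, 1, -1, 1],
}


def radar_pulse_train(kind, fs, n_samples, rng):
    """Return (iq, params) for one randomly parameterized radar pulse train.

    kind is "Rect", "LFM", or "Barker"; all parameter ranges are assumptions.
    """
    prf = rng.uniform(5e3, 20e3)                   # pulse repetition frequency (Hz)
    params = {"prf": prf}
    if kind == "Barker":
        n_chips = int(rng.choice(list(BARKER)))    # random number of chips
        spc = int(rng.integers(2, 11))             # random chip width (samples)
        pulse = np.repeat(np.array(BARKER[n_chips], dtype=complex), spc)
        params.update(n_chips=n_chips, chip_width=spc / fs)
    elif kind in ("Rect", "LFM"):
        pw = rng.uniform(5e-6, 20e-6)              # pulse width (s)
        n_pw = max(1, int(round(pw * fs)))
        if kind == "Rect":
            pulse = np.ones(n_pw, dtype=complex)
        else:
            bw = rng.uniform(fs / 20, fs / 4)      # sweep bandwidth (Hz)
            direction = int(rng.choice([-1, 1]))   # up- or down-chirp
            t = np.arange(n_pw) / fs
            # Instantaneous frequency (direction*bw/pw)*(t - pw/2) sweeps
            # between -bw/2 and +bw/2 over the pulse.
            pulse = np.exp(1j * np.pi * direction * bw / pw * (t - pw / 2) ** 2)
            params.update(bw=bw, direction=direction)
    else:
        raise ValueError(f"unknown waveform kind: {kind}")
    params["pw"] = len(pulse) / fs                 # realized pulse width (s)

    pri = int(round(fs / prf))                     # pulse repetition interval (samples)
    iq = np.zeros(n_samples, dtype=complex)
    for start in range(0, n_samples, pri):
        seg = pulse[:n_samples - start]            # truncate a pulse at the frame end
        iq[start:start + len(seg)] = seg
    return iq, params


rng = np.random.default_rng(0)
fs = 100e6                                         # assumed sample rate (Hz)
dataset = []
for kind in ("Rect", "LFM", "Barker"):
    for _ in range(5):                             # thousands per type in practice
        iq, params = radar_pulse_train(kind, fs, 20_000, rng)
        dataset.append((iq, kind))                 # label attached at generation time
```

Drawing each parameter from a range, rather than fixing it, is what gives every signal unique parameters, so the network cannot simply memorize one template per class.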

All signals are impaired with white Gaussian noise. In addition, a frequency offset with a random carrier frequency is applied to each signal. Finally, each signal is passed through a channel model. In this example, a multipath Rician fading channel is used, but others could be swapped in place of the Rician channel.
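The impairment chain can be sketched the same way. The Rician model here is a deliberately simplified, static (block-fading) stand-in: one line-of-sight tap plus random Rayleigh-scattered taps at assumed integer delays, with no Doppler. The K-factor, delays, SNR range, and frequency-offset range are all assumptions, and the order (channel, then frequency offset, then noise) is a choice made here to mirror a physical receiver.

```python
import numpy as np


def rician_multipath(x, k_factor, delays, rng):
    """Static Rician multipath (simplified). Tap 0 carries a fixed line-of-sight
    component; each integer delay in `delays` gets a random complex Gaussian
    (Rayleigh) scattered component. Average total tap power is 1."""
    h = np.zeros(max(delays) + 1, dtype=complex)
    h[0] = np.sqrt(k_factor / (k_factor + 1))
    p_scatter = 1.0 / ((k_factor + 1) * len(delays))
    for d in delays:
        h[d] += np.sqrt(p_scatter / 2) * (rng.standard_normal() + 1j * rng.standard_normal())
    return np.convolve(x, h)[:len(x)]


def frequency_offset(x, f_off, fs):
    """Mix x by a carrier frequency offset of f_off Hz."""
    n = np.arange(len(x))
    return x * np.exp(2j * np.pi * f_off * n / fs)


def add_awgn(x, snr_db, rng):
    """Add complex white Gaussian noise at snr_db relative to the mean power of x."""
    p_noise = np.mean(np.abs(x) ** 2) / 10 ** (snr_db / 10)
    noise = rng.standard_normal(x.shape) + 1j * rng.standard_normal(x.shape)
    return x + np.sqrt(p_noise / 2) * noise


def impair(x, fs, rng):
    """Channel, then random carrier offset, then receiver noise (ranges assumed)."""
    y = rician_multipath(x, k_factor=4.0, delays=[0, 3, 8], rng=rng)
    y = frequency_offset(y, rng.uniform(-fs / 10, fs / 10), fs)
    return add_awgn(y, snr_db=rng.uniform(-6, 30), rng=rng)


rng = np.random.default_rng(0)
clean = np.ones(20_000, dtype=complex)   # stand-in for a synthesized waveform
noisy = impair(clean, fs=100e6, rng=rng)
```

Because each helper is independent, swapping in a different channel model means replacing only `rician_multipath`.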

The data is labeled as it’s generated, in preparation for training the network. To improve the classification performance of learning algorithms, a common approach is to feed extracted features to the network in place of the original signal data. The features provide a representation of the input that makes it easier for a classifier to discriminate between classes. Here, we compute a time-frequency transform of each signal. Figure 4 shows the downsampled images for one set of data.

4. Time-frequency representations of radar and communications waveforms are illustrated.
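To show what turning a signal into a time-frequency image looks like in code, the sketch below computes a log-magnitude short-time Fourier transform (STFT) and downsamples it to a fixed-size image. This is a swapped-in technique: the Radar Waveform Classification Using Deep Learning example listed at the end of this post uses the Wigner-Ville distribution (WVD) instead. The window length, hop size, 64-by-64 output size, and nearest-neighbour resizing are assumptions.

```python
import numpy as np


def stft_image(x, nfft=128, hop=32, out_shape=(64, 64)):
    """Log-magnitude STFT of complex baseband x, resized to out_shape, scaled to [0, 1].

    Rows are frequency (most negative to most positive); columns are time.
    """
    win = np.hanning(nfft)
    n_frames = 1 + (len(x) - nfft) // hop
    frames = np.stack([x[i * hop:i * hop + nfft] * win for i in range(n_frames)])
    spec = np.fft.fftshift(np.fft.fft(frames, axis=1), axes=1).T   # (freq, time)
    img = 20 * np.log10(np.abs(spec) + 1e-12)
    # Nearest-neighbour "downsampling" to a fixed size (a simple, assumed choice).
    r = np.linspace(0, img.shape[0] - 1, out_shape[0]).round().astype(int)
    c = np.linspace(0, img.shape[1] - 1, out_shape[1]).round().astype(int)
    img = img[np.ix_(r, c)]
    return (img - img.min()) / (img.max() - img.min() + 1e-12)


n = np.arange(4096)
tone = np.exp(2j * np.pi * 0.25 * n)        # a tone at +fs/4
img = stft_image(tone)
peak_row = int(img.mean(axis=1).argmax())   # 47 or 48 of 64: the +fs/4 row
```

A constant tone produces a single bright horizontal line; an LFM pulse would instead trace a slanted line, which is the kind of visual signature a CNN can learn to separate.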

These images are used to train a deep convolutional neural network (CNN). From the data set, the network is trained with 80% of the data and tested with 10%. The remaining 10% is used for validation.
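The 80/10/10 split can be sketched as follows. Splitting each class separately (stratification) is an assumption, not something stated above, and `split_80_10_10` is a hypothetical helper.

```python
import random
from collections import defaultdict


def split_80_10_10(labels, seed=0):
    """Return (train, test, val) index lists with each class split 80/10/10.

    Per-class (stratified) splitting keeps every modulation type represented
    in all three subsets.
    """
    by_class = defaultdict(list)
    for i, label in enumerate(labels):
        by_class[label].append(i)
    rng = random.Random(seed)
    train, test, val = [], [], []
    for idx in by_class.values():
        rng.shuffle(idx)
        n_train = len(idx) * 8 // 10     # integer arithmetic avoids float rounding
        n_test = len(idx) // 10
        train += idx[:n_train]
        test += idx[n_train:n_train + n_test]
        val += idx[n_train + n_test:]    # remainder goes to validation
    return train, test, val


labels = ["Rect"] * 1000 + ["LFM"] * 1000 + ["Barker"] * 1000
train, test, val = split_80_10_10(labels)
print(len(train), len(test), len(val))   # 2400 300 300
```

For the second data set described later (11 modulation types of 10,000 frames each), the same split gives 88,000 training, 11,000 test, and 11,000 validation frames.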

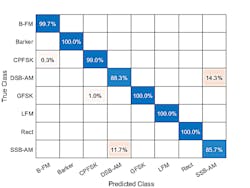

On average, over 85% of AM signals were correctly identified. The confusion matrix (Fig. 5) shows that a high percentage of DSB-AM signals were misclassified as SSB-AM and vice versa.

5. The confusion matrix with the results of the classification reveals that a high percentage of DSB-AM signals were misclassified as SSB-AM and vice versa.

The framework for this workflow enables you to investigate the misclassifications to gain insight into the network’s learning process. DSB-AM and SSB-AM signals have very similar signatures, which partly explains the network’s difficulty in distinguishing these two types. Further signal processing could make the differences between them clearer to the network and improve classification.
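A confusion-matrix analysis like this can be reproduced on any set of predictions with a few lines of Python. The labels below are made up purely to show the mechanics; they are not results from this workflow, and the helper names are hypothetical.

```python
from collections import Counter


def confusion_matrix(true_labels, pred_labels, classes):
    """Counts with rows = true class and columns = predicted class."""
    counts = Counter(zip(true_labels, pred_labels))
    return [[counts[(t, p)] for p in classes] for t in classes]


def per_class_accuracy(cm, classes):
    """Fraction of each true class predicted correctly (diagonal / row sum)."""
    return {c: (row[i] / sum(row) if sum(row) else float("nan"))
            for i, (c, row) in enumerate(zip(classes, cm))}


def top_confusions(cm, classes, k=3):
    """Largest off-diagonal entries as (count, true_class, predicted_class)."""
    off_diag = [(cm[i][j], classes[i], classes[j])
                for i in range(len(classes)) for j in range(len(classes))
                if i != j and cm[i][j] > 0]
    return sorted(off_diag, reverse=True)[:k]


# Made-up toy labels for illustration only (not the article's results).
classes = ["DSB-AM", "SSB-AM", "LFM"]
true = ["DSB-AM"] * 4 + ["SSB-AM"] * 4 + ["LFM"] * 2
pred = ["DSB-AM", "DSB-AM", "DSB-AM", "SSB-AM",
        "SSB-AM", "SSB-AM", "DSB-AM", "SSB-AM",
        "LFM", "LFM"]
cm = confusion_matrix(true, pred, classes)
print(cm)                                # [[3, 1, 0], [1, 3, 0], [0, 0, 2]]
print(per_class_accuracy(cm, classes))
print(top_confusions(cm, classes))
```

Because rows are true classes and columns are predictions, the off-diagonal entries returned by `top_confusions` point directly at pairs such as DSB-AM/SSB-AM that deserve a closer look.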

This example shows how radar and communications modulation types can be classified by using time-frequency signal-processing techniques and a deep-learning network.

Using Software-Defined Radio

Let’s look at another example in which we use data from a software-defined radio for the testing phase.

For our second approach, we generate a different data set to work with. The data set includes the following 11 modulation types (eight digital and three analog):

- Binary phase-shift keying (BPSK)

- Quadrature phase-shift keying (QPSK)

- 8-ary phase-shift keying (8-PSK)

- 16-ary quadrature amplitude modulation (16-QAM)

- 64-ary quadrature amplitude modulation (64-QAM)

- 4-ary pulse amplitude modulation (PAM4)

- Gaussian frequency-shift keying (GFSK)

- Continuous-phase frequency-shift keying (CPFSK)

- Broadcast FM (B-FM)

- Double-sideband amplitude modulation (DSB-AM)

- Single-sideband amplitude modulation (SSB-AM)

In this example, we generate 10,000 frames for each modulation type. Again, 80% of the data is used for training, 10% for testing, and 10% for validation.

For digital modulation types, eight samples represent each symbol. The network makes each decision based on a single frame rather than on multiple consecutive frames. As in our first example, each signal is passed through a channel with additive white Gaussian noise (AWGN), Rician multipath fading, and a clock offset. We then generate channel-impaired frames for each modulation type and store the frames with their corresponding labels.
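A simplified sketch of generating labeled digital frames at eight samples per symbol follows. It uses rectangular pulse shaping and linear-interpolation resampling for the clock offset, both simplifications chosen for brevity. The constellation set covers only the six linear digital types (GFSK, CPFSK, and the analog types are omitted), and the 128-symbol frame length and ±5 ppm offset range are assumptions. Noise and fading would be applied to each frame before it is stored.

```python
import numpy as np


def square_qam(m_order):
    """Square M-QAM constellation normalized to unit average power."""
    side = int(round(np.sqrt(m_order)))
    levels = np.arange(-(side - 1), side, 2)
    pts = (levels[:, None] + 1j * levels[None, :]).ravel()
    return pts / np.sqrt(np.mean(np.abs(pts) ** 2))


CONSTELLATIONS = {
    "BPSK": np.array([1, -1], dtype=complex),
    "QPSK": np.exp(1j * (np.pi / 4 + np.pi / 2 * np.arange(4))),
    "8PSK": np.exp(2j * np.pi * np.arange(8) / 8),
    "16QAM": square_qam(16),
    "64QAM": square_qam(64),
    "PAM4": np.array([-3, -1, 1, 3], dtype=complex) / np.sqrt(5),
}


def digital_frame(mod, n_symbols, rng, sps=8):
    """Random symbols at sps samples per symbol (rectangular pulses, a simplification)."""
    symbols = rng.choice(CONSTELLATIONS[mod], size=n_symbols)
    return np.repeat(symbols, sps)


def clock_offset(x, ppm):
    """Resample x as if the receiver clock were off by `ppm` parts per million
    (linear interpolation; output is slightly shorter when ppm > 0)."""
    n = np.arange(len(x))
    t = n * (1 + ppm * 1e-6)
    t = t[t <= len(x) - 1]
    return np.interp(t, n, x.real) + 1j * np.interp(t, n, x.imag)


rng = np.random.default_rng(0)
frames = []
for mod in CONSTELLATIONS:
    for _ in range(4):                  # 10,000 per type in the article
        frame = clock_offset(digital_frame(mod, 128, rng), ppm=rng.uniform(-5, 5))
        frames.append((frame, mod))     # store each frame with its label
```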

To make the scenario more realistic, a random number of samples is removed from the beginning of each frame to discard transients and to make sure that the frames have a random starting point with respect to the symbol boundaries. Figure 6 shows the time and time-frequency representations of each waveform type.

6. Shown are examples of time representation of generated waveforms (top) and corresponding time-frequency representations (bottom).
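The random start point amounts to a simple crop. In the sketch below, the transient length of 50 samples and the frame length of 1,024 samples are hypothetical values; the random part of the skip spans one symbol period (`sps` samples), which is enough to randomize the frame start relative to symbol boundaries.

```python
import random


def random_start_frame(x, frame_len, sps, transient, rng):
    """Skip `transient` samples plus a random 0..sps-1 offset, then return
    (frame, skip). Works on lists or NumPy arrays."""
    skip = transient + rng.randrange(sps)
    if skip + frame_len > len(x):
        raise ValueError("input is too short for the requested frame length")
    return x[skip:skip + frame_len], skip


rng = random.Random(1)
x = list(range(2000))                    # stand-in for 2,000 baseband samples
frame, skip = random_start_frame(x, frame_len=1024, sps=8, transient=50, rng=rng)
print(skip, len(frame), frame[0] == skip)
```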

In the previous example, we transformed each of the signals to an image. For this example, we apply an alternate approach in which the I/Q baseband samples are used directly without further preprocessing.

One way to do this is to place the I and Q baseband samples in the two rows of a 2D array. With this layout, the convolutional layers process the in-phase and quadrature components independently, and information from the two components is combined only in the fully connected layer. This approach yields 90% accuracy.

A variant of this approach arranges the I/Q samples as a 3D array in which the in-phase and quadrature components form the third dimension (pages). This layout mixes I and Q information even in the convolutional layers and makes better use of the phase information. The variant achieves more than 95% accuracy, so representing I/Q components as pages instead of rows improves accuracy by about 5 percentage points.

As the confusion matrix in Figure 7 shows, representing I/Q components as pages instead of rows dramatically increases the ability of the network to accurately differentiate between 16-QAM and 64-QAM frames and between QPSK and 8-PSK frames.

7. The confusion matrix of results with I/Q components as pages indicates the dramatically increased ability of the network to accurately differentiate between 16-QAM and 64-QAM frames and between QPSK and 8-PSK frames.
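The difference between the row and page layouts can be seen with a single hand-rolled convolution filter. This is a sketch, not the network described above: it assumes 1-by-k filters (height 1), which is what keeps the I and Q rows separate in the row layout, and uses NumPy `(height, width, channels)` arrays in place of a deep-learning framework. The helper names are hypothetical.

```python
import numpy as np


def iq_as_rows(x):
    """I and Q in two rows of a 2-D array: shape (height=2, width=N, channels=1)."""
    return np.stack([x.real, x.imag])[:, :, None]


def iq_as_pages(x):
    """I and Q as two pages (channels): shape (height=1, width=N, channels=2)."""
    return np.stack([x.real, x.imag], axis=-1)[None, :, :]


def conv_1xk(img, kernel):
    """'Valid' 1-by-k cross-correlation along the width axis, as a CNN conv layer
    with one filter computes it. img: (H, W, C), kernel: (k, C).
    Channels (pages) are summed together; rows are never mixed."""
    height, width, channels = img.shape
    k = kernel.shape[0]
    out = np.zeros((height, width - k + 1))
    for h in range(height):
        for c in range(channels):
            out[h] += np.correlate(img[h, :, c], kernel[:, c], mode="valid")
    return out


rng = np.random.default_rng(0)
x = rng.standard_normal(64) + 1j * rng.standard_normal(64)
x_new_q = x.real + 1j * (x.imag + 1.0)      # same I samples, different Q samples
k_rows = rng.standard_normal((8, 1))        # one-channel 1-by-8 filter
k_pages = rng.standard_normal((8, 2))       # two-channel 1-by-8 filter

rows_a = conv_1xk(iq_as_rows(x), k_rows)
rows_b = conv_1xk(iq_as_rows(x_new_q), k_rows)
pages_a = conv_1xk(iq_as_pages(x), k_pages)
pages_b = conv_1xk(iq_as_pages(x_new_q), k_pages)

print(np.allclose(rows_a[0], rows_b[0]))    # True: the I-row output ignores Q
print(np.allclose(pages_a, pages_b))        # False: every output mixes I and Q
```

In the row layout, changing Q leaves the I-row feature map untouched, so any I/Q interaction must wait for later layers; in the page layout, the very first filter sees both components at once.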

In the first example, we tested the network using synthesized data. For the second example, we use over-the-air signals from two ADALM-PLUTO Radios, one acting as the transmitter and the other as the receiver (Figure 8). The network achieves 99% overall accuracy when the two radios are stationary and set up on a desktop. This is better than the result for synthetic data, likely because two stationary radios on a desktop see a more benign channel than the impairments applied to the synthetic frames. The workflow can be extended to radar and radio data collected in more realistic scenarios.

8. This is the SDR configuration using ADALM-PLUTO Radios. One is used as a transmitter and one is a receiver.

Figure 9 shows an app using live data from the receiver. The received waveform is shown as an I/Q signal over time. The Estimated Modulation window in the app reveals the probability of each type as predicted by the network.

9. Shown is the Waveform Modulation Classifier app with live data classification. Here, the received waveform is an I/Q signal over time.
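Per-class probabilities like those in the Estimated Modulation window are typically obtained by applying a softmax to a classification network’s final-layer scores. The sketch below assumes that setup; the scores are made up, and nothing here reflects how the app itself is implemented.

```python
import math


def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    m = max(logits)                      # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def rank_modulations(logits, classes):
    """Pair each class with its probability, most likely first."""
    return sorted(zip(classes, softmax(logits)), key=lambda cp: cp[1], reverse=True)


classes = ["BPSK", "QPSK", "8PSK", "16QAM", "64QAM", "PAM4",
           "GFSK", "CPFSK", "B-FM", "DSB-AM", "SSB-AM"]
logits = [0.1, 4.2, 1.3, 0.0, -0.5, 0.2, -1.0, -1.1, -2.0, 0.3, 0.1]   # made-up scores
for name, p in rank_modulations(logits, classes)[:3]:
    print(f"{name:7s} {p:.3f}")
```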

Frameworks and tools exist to automatically extract time-frequency features from signals, and these features can be used to perform modulation classification with a deep-learning network. Signals can also be fed to a deep-learning network in other forms, such as raw I/Q samples.

It’s possible to generate and label synthetic, channel-impaired waveforms that can augment or replace live data for training purposes. These types of systems can be validated with over-the-air signals from SDRs and radars.

To learn more about the topics covered in this blog, see the examples below.

- Radar Waveform Classification Using Deep Learning (example): Learn how to classify radar waveform types of generated synthetic data using the Wigner-Ville distribution (WVD) and a deep CNN.

- Radar Target Classification Using Machine Learning and Deep Learning (example): Learn how to classify radar returns with both machine- and deep-learning approaches.

- Modulation Classification with Deep Learning (example): Learn how to use a CNN for modulation classification. You generate synthetic, channel-impaired waveforms. Using the generated waveforms as training data, you train a CNN for modulation classification. Then you test the CNN with SDR hardware and over-the-air signals.

- Deep Learning for Signals (video): Learn how you can use techniques such as time-frequency transformations and wavelet scattering networks in conjunction with CNNs and recurrent neural networks to build predictive models on signals.

See additional 5G, radar, and EW resources, including those referenced in previous blog posts.