Newer cellular network technologies like Long Term Evolution (LTE) and Long Term Evolution Advanced (LTE-A) are becoming the most widely used cellular technologies worldwide. The reasons behind this trend were discussed in the first part of this article series, titled “Cellular Networks Get Primed for Tomorrow.” Essentially, these newer wireless network technologies make better use of the carriers’ most valuable asset (their spectrum licenses). The adoption of these standards, coupled with consumers’ increasing desire for higher peak and sustained data rates, will drive many changes in cellular networks and in how they are configured and deployed.

A key consideration with LTE and LTE-A is that the available user throughput scales with the quality of the signal (as measured by the signal-to-noise ratio) and falls as the number of simultaneous users sharing the resource grows. Given this fundamental physical restriction, operators will attempt to provide much smaller cell footprints than were previously the standard. The push to denser, smaller networks, which maintain high quality-of-service (QoS) levels while serving fewer simultaneous users per cell, will result in multiple trends over the next few years.
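The relationship between signal quality, user count, and throughput can be illustrated with a rough sketch. It uses the Shannon-Hartley bound as a stand-in for the real LTE rate adaptation and assumes that a cell's capacity is split evenly among its users; the 10-MHz bandwidth, the SNR values, and the function names are illustrative assumptions rather than figures from a specific deployment.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Upper bound on the data rate of one channel (Shannon-Hartley)."""
    snr_linear = 10 ** (snr_db / 10)  # convert dB to a linear power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)


def per_user_throughput_bps(bandwidth_hz: float, snr_db: float, users: int) -> float:
    """Split the cell's capacity evenly among simultaneous users (assumed)."""
    if users < 1:
        raise ValueError("users must be at least 1")
    return shannon_capacity_bps(bandwidth_hz, snr_db) / users


if __name__ == "__main__":
    BANDWIDTH_HZ = 10e6  # assumed 10-MHz carrier
    # Compare a weak and a strong signal, and a crowded and a lightly loaded cell.
    for snr_db, users in [(5, 50), (20, 50), (20, 5)]:
        rate_mbps = per_user_throughput_bps(BANDWIDTH_HZ, snr_db, users) / 1e6
        print(f"SNR {snr_db:>2} dB, {users:>2} users: {rate_mbps:.2f} Mb/s per user")
```

Under these assumptions, raising the SNR or cutting the number of users per cell both raise the per-user rate, which is the motivation for smaller cells.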

In addition to technical constraints, the business needs of organizations are a significant factor in looking at how network topology will change in the coming years. While many carriers want to improve the coverage area and service levels received by their customers, they do not want to lose potential revenue in the process. This sometimes puts the technology at odds with what the carriers and other stakeholders are willing to deploy, regardless of what can be deployed in theory. Perhaps the use of WiFi with cellular—especially to handle voice traffic—is the best example of this.

Cost of Equipment Dropping

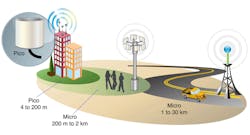

In terms of both equipment and layout, the network of tomorrow will have to factor in and resolve all of these issues. Financially, for example, the spread of lower-cost equipment and services creates the possibility of having many more network sites than previous budgets would have permitted. A typical “macrocell” covers a radius of 1 to 30 km [1]. In 1990, that installation would have cost a provider over $450,000 [2]. In 2010, a base station could be deployed for about $40,000 in equipment cost (a savings of over 90%). This decrease in cost will allow operators to cover the same area (about 706 km², the footprint of a cell with a 15-km radius) with 10 times as many cell sites utilizing the same capital budget. Each of the resulting cell sites would be a microcell (radius of coverage between 200 m and 2 km), allowing the same channel resources to be reused 10 times as frequently (known as frequency reuse, a key consideration in cellular planning). If the area being covered by the network has a uniform distribution of potential users, each user can be provided 10 times the peak and average data rate that would be possible with the larger network coverage areas.
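The arithmetic behind these figures can be checked with a short sketch. The 15-km macrocell radius is an assumption chosen because it reproduces the 706 km² figure; the cost values are the ones quoted above, and the function names are hypothetical.

```python
import math

MACROCELL_COST_1990_USD = 450_000    # quoted 1990 installation cost
BASE_STATION_COST_2010_USD = 40_000  # quoted 2010 equipment cost
ASSUMED_MACRO_RADIUS_KM = 15.0       # assumption: reproduces ~706 km^2


def savings_fraction(old_cost: float, new_cost: float) -> float:
    """Fraction of the old cost that is saved at the new price."""
    return 1 - new_cost / old_cost


def affordable_sites(budget: float, cost_per_site: float) -> int:
    """Whole number of sites that fit in a fixed capital budget."""
    return int(budget // cost_per_site)


def circle_area_km2(radius_km: float) -> float:
    return math.pi * radius_km ** 2


def equivalent_radius_km(area_km2: float) -> float:
    """Radius of a circle with the given area."""
    return math.sqrt(area_km2 / math.pi)


if __name__ == "__main__":
    savings = savings_fraction(MACROCELL_COST_1990_USD, BASE_STATION_COST_2010_USD)
    sites = affordable_sites(MACROCELL_COST_1990_USD, BASE_STATION_COST_2010_USD)
    macro_area = circle_area_km2(ASSUMED_MACRO_RADIUS_KM)
    small_radius = equivalent_radius_km(macro_area / 10)  # ten equal-area cells
    print(f"Savings: {savings:.1%}, sites per old budget: {sites}")
    print(f"Macrocell area: {macro_area:.0f} km^2")
    print(f"Equivalent radius of each of 10 cells: {small_radius:.1f} km")
```

In this idealized equal-area split, each of the ten cells has an equivalent radius of about 4.7 km, which is larger than the microcell range quoted above. Real deployments concentrate small cells where demand is highest rather than tiling an area uniformly.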

Of course, deploying denser cellular networks also comes with a few disadvantages. The most notable is the requirement for more cell-to-cell handovers for users in motion, which drives up the intra-network communication requirements and increases the risk of dropped calls. One advantage of Internet Protocol (IP)-based network topologies, such as LTE, is that they are more robust to momentary losses of connectivity, because higher-level protocols buffer most traffic types across the gap. With IP-based networks, a mobile user also can connect to multiple base stations simultaneously while on the edge of service, providing for completely seamless handover of the communication. Some third-generation (3G) cellular protocols did allow for this type of handover (known in the industry as a “soft” handover). But the newer, IP-focused protocols are much better optimized to leverage the real channel behavior and issues involved.

In practice, providers are taking advantage of decreasing capital costs to overlay heterogeneous network coverage. Often, they are providing for a larger “macrocell” (that covers a whole region) with inlaid smaller “microcells” (that provide additional user-shared bandwidth for the higher-demand areas within their service territories). This coverage overlay provides the end user with continuous coverage and, in most places, allows them to consume the higher throughput as well. By using this type of deployment technique, operators are able to encourage the highest rate of service use, improve upon the overall perception of service quality, and thus maximize the revenue generated by each user of their network.

Picocells by Providers

In addition to deploying denser networks, most of the major providers [3] now allow their customers to buy “picocells” (often marketed as femtocells when they cover a single building). These devices have a range of 4 to 200 m, which is sufficient to cover the users’ own homes and offices with even more throughput. In most of these cases, the customers buy a device, such as Samsung’s Network Extender by Verizon [4] or AT&T’s 3G Microcell [5], from the provider. Some providers charge only for the equipment, while others add a monthly fee for its use. In either case, the customers are helping the providers finance the deployment of better coverage.

A hidden cost with this service is that the customers must provide their own broadband Internet connection to use these devices. This aspect adds system complexity, as the data and voice traffic must be routed through a third-party network (such as a cable provider’s). As most homes and offices already have Internet connections, this cost often isn’t perceived by the customer. But it is a benefit to the provider, which does not have to make the capital investment in the last-mile connection.

On the negative side, numerous other issues need to be overcome once another technology or transport is introduced—especially one out of the provider’s direct control. Largely for these reasons, some of the user reviews have been unfavorable as the carriers figure out how to deploy and manage the service.

High-Rate Data Backhaul

For a cellular network to provide calls and connections beyond the tower that is serving the device, the operator must provide (or have access to) a way to route the data back to the following: switching centers, the public switched telephone network (PSTN), and the other sources of data the user desires to access (e.g., Internet services and servers).

With the proliferation of higher-data-rate services, such as LTE and LTE-A, the throughput requirements of these links (and the efficient routing of their traffic) become increasingly demanding. Originally, many towers had a T1 (or perhaps T3) connection to the switching centers, which provided between 1.5 and 45 Mb/s. Today, an 8-T1 connection is considered the standard for a carrier’s link to a tower (providing 12 Mb/s). In the last four years, for example, AT&T claims to have increased its traffic by more than 8,000% [6]. NPD has predicted that, by 2014, Ethernet over fiber will become the dominant technology, accounting for over 85% of base-station usage. Carriers are presently requesting over 50 Mb/s per tower and 100 Mb/s or more in some urban areas.
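To put these backhaul figures in perspective, the following sketch works through the capacity of bonded T1 lines. It uses the nominal line rates of 1.544 Mb/s for a T1 and 44.736 Mb/s for a T3 and ignores framing and bonding overhead, which is a simplifying assumption; the function names are illustrative.

```python
import math

T1_MBPS = 1.544   # nominal T1 line rate
T3_MBPS = 44.736  # nominal T3 line rate


def bonded_t1_mbps(lines: int) -> float:
    """Aggregate nominal rate of several bonded T1 lines (overhead ignored)."""
    return lines * T1_MBPS


def t1_lines_needed(target_mbps: float) -> int:
    """Smallest number of T1 lines whose nominal rate meets the target."""
    return math.ceil(target_mbps / T1_MBPS)


if __name__ == "__main__":
    print(f"8 bonded T1 lines: {bonded_t1_mbps(8):.1f} Mb/s")
    for target in (50, 100):
        print(f"{target} Mb/s would need {t1_lines_needed(target)} T1 lines")
```

Meeting a 50-Mb/s request with T1 lines alone would take more than 30 circuits per tower, which helps explain the predicted move to Ethernet over fiber.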

Use of Repeater Nodes

When suitable backhaul is not available, a repeater node can still be used to improve coverage. This approach uses the provider’s licensed spectrum or other available spectrum to backhaul user data to the main network. Repeater nodes have the disadvantage of consuming spectrum for backhauling data, which otherwise could be dedicated to serving users. The advantage, however, is that they allow the operator to cover harder-to-reach areas, where fiber data rates cannot be sustained. Such nodes also are very useful on the edge of cellular coverage to extend that coverage.
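The spectrum cost of a repeater can be sketched with a deliberately simple model. It assumes the node shares one block of spectrum between its backhaul link and its user-facing link by splitting time between them, so the end-to-end rate is limited by whichever link receives less capacity. The link rates and function names below are hypothetical.

```python
def end_to_end_mbps(backhaul_mbps: float, access_mbps: float, backhaul_share: float) -> float:
    """Throughput through the node when backhaul_share of the resource
    (between 0 and 1) carries backhaul and the rest serves users."""
    if not 0 <= backhaul_share <= 1:
        raise ValueError("backhaul_share must be between 0 and 1")
    return min(backhaul_share * backhaul_mbps, (1 - backhaul_share) * access_mbps)


def balanced_backhaul_share(backhaul_mbps: float, access_mbps: float) -> float:
    """Share that equalizes both links, which maximizes the minimum."""
    return access_mbps / (access_mbps + backhaul_mbps)


if __name__ == "__main__":
    backhaul, access = 60.0, 20.0  # hypothetical link rates in Mb/s
    share = balanced_backhaul_share(backhaul, access)
    rate = end_to_end_mbps(backhaul, access, share)
    print(f"Backhaul share: {share:.0%}, user throughput: {rate:.1f} Mb/s")
```

With these example numbers, users see 15 Mb/s rather than the 20 Mb/s that the access link could deliver if the backhaul ran over a separate medium.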

In LTE-A, relay nodes (RNs) use a slightly different part of the LTE-A air interface (defined as Un) than that used by normal terminals (known as Uu). As a result, the RN can communicate with the donor/anchor base station (Donor eNB) for backhaul purposes and provide a local terminal with its expected Uu link. RNs can use the same frequency or a different frequency for communication with the donor base station and terminals.

WiFi as a Possible Substitute

In addition to using cellular networks, some providers are using WiFi as a way to extend their coverage areas, especially in buildings and at home on private networks. T-Mobile, for example, markets WiFi calling as a feature available to its customers when they are within suitable coverage [7]. When using this feature, however, the customer still consumes monthly plan minutes, which means that T-Mobile is able to generate revenue from providing this support. By using multiple technologies (WiFi and GSM or WCDMA) as a transport layer, the carrier is able to deploy a semi-heterogeneous network that massively extends the reach of its coverage while leveraging the host’s data backhaul. What keeps this solution from being fully heterogeneous (seamless) is that, in many cases, the user cannot hand over (or transfer) a call from WiFi to the cellular network while the call is in progress. As with picocells, the quality of service is more problematic here than it is on the native network [8]. Yet this technique does provide service when the customer otherwise would likely not be able to use the phone.

In addition to the intentional, provider-enabled overlaying of WiFi with cellular, many users are electing to use Skype (or similar products) on their smartphones to make voice calls over WiFi. When such calling is not enabled directly by the provider (either through picocells or as a service on the phone), the user may have to deal with disadvantages like lower call quality or, in some cases, having to use a different phone number. Yet those issues are presumably offset by the savings in minutes and data that consumers would otherwise use from their existing cellular plans. Beyond Skype-like products, many more users rely on WiFi on their smartphones for e-mail and browsing the Internet whenever possible, for similar reasons.

There are some efforts in the industry [9] to improve the LTE standard so that it coexists with WiFi while providing better quality of service and seamless handover between WiFi and cellular networks, forming a true heterogeneous network. Until the industry standardizes how WiFi and LTE or LTE-A are to interact, however, deployments will generally be limited to vendor-specific, proprietary solutions. There is considerable debate among carriers, Internet companies, and consumers on how beneficial it would be to allow seamless movement between cellular and consumer-owned WiFi equipment. Because carriers make their revenue by selling use of their licensed spectrum, the prospect of a user moving from a network fully under their control to an open third-party network understandably concerns them. At least for the immediate future, it is my belief that the cellular industry will not integrate these technologies beyond use as a lower-level transport layer (as with femtocells) or where forced by consumer choice or demand.

QoS Issues on the Backhaul

Service link quality is one of the most significant backhaul problems to be overcome when carriers deploy cellular service over IP networks outside of their direct control. Many IP-based networks were built and optimized around data communication, which is generally bursty and does not require sustained bandwidth. When a user loads a new web page, all of the content is downloaded at once (the words, pictures, and styles in use). On a site with numerous images and other content, several megabytes may be downloaded to support that one page.

After the page is loaded, however, the link can remain essentially unused for seconds or minutes as the user reads and digests the content. With many video services (e.g., Netflix or Amazon Prime), the connection buffers seconds’ to minutes’ worth of data in the local device. That way, if connectivity is lost for a brief amount of time or another user wishes to make use of shared bandwidth, the end user’s experience is not impacted. They are unaware of the temporary loss of connectivity.

Yet real-time services like voice connections and camera feeds, which people expect from a cellular network, cannot rely on buffering. They are heavily affected by a momentary loss of data connectivity or by restricted and inconsistent data rates. Also of concern are items like latency (how long it takes a packet of information to get from point A to point B) and jitter (how much that latency varies while the connection is in use).
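As a rough illustration of these two measures, the sketch below derives per-packet latency from send and receive timestamps and then summarizes jitter as the mean absolute change in latency between consecutive packets. This is only one of several jitter definitions in use, and the timestamps (packets sent every 20 ms, a spacing assumed here for a voice stream) are invented for the example.

```python
from statistics import mean


def one_way_latencies_ms(send_times_ms, receive_times_ms):
    """Latency of each packet: receive time minus send time."""
    return [rx - tx for tx, rx in zip(send_times_ms, receive_times_ms)]


def mean_jitter_ms(latencies_ms):
    """Mean absolute change in latency between consecutive packets."""
    if len(latencies_ms) < 2:
        return 0.0
    changes = [abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:])]
    return mean(changes)


if __name__ == "__main__":
    sent = [0, 20, 40, 60, 80]         # hypothetical send timestamps (ms)
    received = [50, 72, 88, 115, 130]  # hypothetical receive timestamps (ms)
    latencies = one_way_latencies_ms(sent, received)
    print(f"Latencies: {latencies} ms")
    print(f"Mean latency: {mean(latencies)} ms")
    print(f"Mean jitter: {mean_jitter_ms(latencies)} ms")
```

A stream can have an acceptable mean latency and still sound poor if the jitter is high, because the receiver must either wait longer before playing audio or discard late packets.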

For cellular use over a commercial backhaul, the previously mentioned issues result in variable service quality for the users (if connectivity is even possible). They also affect the end user’s overall perception of quality. This issue is one of the two main reasons why people’s experiences with femtocells and home networks often earn much lower satisfaction reviews than the classical cellular network. In the cellular network, the carrier has designed the system around these concerns and can manage the end-to-end user experience. The other main reason is the interference and performance of the RF link, a topic that will be discussed in much more detail in the third installment of this series, on optimization.

Newer IP networks have the ability to schedule their packets with differing priority. Increasingly, network operators are beginning to provide end-to-end support in their networks for this feature. When in place, this feature allows equipment to prioritize packets that contain latency- and throughput-sensitive information over general traffic.

Packet prioritization has gone a long way toward improving quality of service for many voice-over-IP (VoIP) users. When available, it is directly leveraged by femtocell and other installations. With many last-mile providers trying to sell VoIP offerings to their customers, however, there is a natural economic push for them to limit these features to their own service offerings. They also would benefit from limiting others so that their own services deliver better quality than competing solutions, allowing them to charge more for the service. Equally important to note is that equipment capable of scheduling packets by priority is more expensive. This fact tends to push low-cost providers away from offering the additional features to their customers if their main target is data services.
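The packet prioritization described above can be sketched as a strict-priority queue. This is a toy model rather than how any particular router is implemented: real equipment typically classifies traffic by markings in the IP header and applies more elaborate queueing disciplines. The class name, priority values, and packet labels are hypothetical.

```python
import heapq
from itertools import count

VOICE = 0  # lower number is served first (assumed convention)
DATA = 1


class PriorityScheduler:
    """Serve the highest-priority packet first, in arrival order within a class."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker that preserves arrival order

    def enqueue(self, packet: str, priority: int) -> None:
        heapq.heappush(self._heap, (priority, next(self._arrival), packet))

    def dequeue(self) -> str:
        _, _, packet = heapq.heappop(self._heap)
        return packet

    def __len__(self) -> int:
        return len(self._heap)


if __name__ == "__main__":
    scheduler = PriorityScheduler()
    scheduler.enqueue("web-1", DATA)
    scheduler.enqueue("voice-1", VOICE)
    scheduler.enqueue("web-2", DATA)
    scheduler.enqueue("voice-2", VOICE)
    while len(scheduler):
        print(scheduler.dequeue())
```

In this sketch, the voice packets leave the queue ahead of the web traffic even though they arrived later, which is what keeps their latency low when the link is congested.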

A Customer-Assisted Network Deployment Model

With the focus switching from large macrocells to smaller cells, some cellular providers are creating ways to get their customers to assist in providing better service coverage and quality, not just for themselves but for other system users as well. Verizon’s Network Extenders, for example, prioritize connections made to the equipment owner. But they require that other Verizon phones be able to use the device when it is idle. With this business model, the consumer installing the device in a denser area, such as an apartment building, improves his or her own service level (the primary goal) while also improving the perceived quality and experience in the neighboring units. As an increasing number of small femtocell networks are deployed, and the quality of service provided by the backhaul connection improves to a sufficient level, this solution will become more attractive.

Wrap Up

In the first article in this series, the discussion focused on the newer protocols that provide bandwidth scalability based on user needs and how this was a function of the signal-to-noise ratio achievable on the RF channels. Throughout this article, the discussion centered on smaller cell sizes, the trends driving the industry to adopt them, and the associated issues. In the final article in this series, I will synthesize and analyze these areas. I will then discuss the optimization of quasi-heterogeneous and fully heterogeneous networks, which is the key to making the technology meaningful and usable.

References

1. http://www.wirelesscommunication.nl/reference/chaptr04/cellplan/cellsize.htm

2. http://www.md7.com/assets/001/5073.pdf

3. http://cellphones.lovetoknow.com/Wireless_Network_Extender

4. http://www.verizonwireless.com/b2c/device/network-extender

5. http://www.att.com/standalone/3gmicrocell/?fbid=EANznNrLGs0

6. http://www.ruraltelecom.org/march/april-2012/wired-networks-and-the-backhaul-bonus.html

7. http://support.t-mobile.com/docs/DOC-1680

8. http://support.t-mobile.com/thread/24785

9. http://www.engadget.com/2012/01/05/sk-telecom-heterogeneous-wireless-technology-100mbps/

About the Author

Tom Callahan

General Manager and CTO

Tom Callahan is the General Manager and CTO of QRC Technologies, a provider of network-discovery cellular test and measurement tools for military and government use. Prior to establishing QRC Technologies, Callahan was Vice President of Engineering for PCTEL’s RF Solutions group, which focused on building cellular scanning receivers. He previously worked as a DSP Engineer building signal-intercept systems for Watkins–Johnson. Callahan has a BSEE and MBA. He is an expert in the air interfaces for cellular protocols and has worked in numerous roles including as a DSP, software, and systems engineer. Callahan also is a Certified Program Management Professional (PMP).