11 Myths About GPGPU Computing

Two of the major difficulties facing modern embedded systems can be summed up in a few words: loss of calculation power and increased power consumption. Some of the primary culprits include the influx of data sources, continued technology upgrades, shrinking system size, and increasing densities within a system itself.

High-performance embedded computer (HPEC) systems have begun to utilize the specialized parallel computational speed and performance on general-purpose graphic processor units (GPGPUs), enabling system designers to bring exceptional power and performance into rugged, extended-temperature small form factors (SFFs).

GPU-accelerated computing combines a graphics processing unit (GPU) together with a central processing unit (CPU) to accelerate applications, and offload some of the computation-intensive portions from the CPU to the GPU.

It’s important to recognize that, as processing requirements continue to rise, the main computing engine—the CPU—will eventually choke. However, the GPU has evolved into an extremely flexible and powerful processor, and can handle certain computing tasks better, and faster, than the CPU, thanks to improved programmability, precision, and parallel-processing capabilities.

Having a deeper understanding of GPGPU computing, its limitations, as well as its capabilities can help you choose the best products that will deliver the best performance for your application.

1. GPGPUs are really only useful in consumer-based electronics, like graphics rendering in gaming.

Not true. As has been shown over the past few years, GPGPUs are redefining what’s capable in terms of data processing and deep-learning networks, as well as shaping expectations in the realm of artificial intelligence. A growing number of military and defense projects based on GPGPU technology have already been deployed, including systems that are using the advanced processing capabilities for radars, image recognition, classification, motion detection, encoding, etc.

2. Because they’re “general purpose,” GPUs aren’t designed to handle complex, high-density computing tasks.

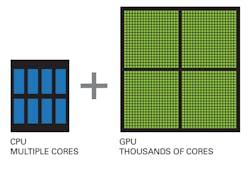

Also not true. A typical powerful RISC or CISC CPU has tens of “sophisticated” cores. A GPU has thousands of “specialized” cores optimized to address and manipulate large data matrices, such as displays or input devices and optical cameras (Fig. 1). These GPU cores allow applications to spread algorithms across many cores and more easily architect and perform parallel processing. The ability to create many concurrent “kernels” on a GPU—where each “kernel” is responsible for a subset of specific calculations—gives us the ability to perform complex, high-density computing.

1. While multicore CPUs provide enhanced processing, CUDA-based GPUs offer thousands of cores that operate in parallel and process high volumes of data simultaneously.

The GPGPU pipeline uses parallel processing on GPUs to analyze data as if it were an image or other graphic data. While GPUs operate at lower frequencies, they typically have many times the number of cores. Thus, GPUs can process far more pictures and graphical data per second than a traditional CPU. Using the GPU parallel pipeline to scan and analyze graphical data creates a large speedup.

3. GPGPUs aren’t rugged enough to withstand harsh environments, like down-hole well monitoring, mobile, or military applications.

False. The onus of ruggedization is actually on the board or system manufacturer. Many parts and components used in a harsh electronics environment aren’t rugged at manufacture, and it’s the same with GPGPUs. This is where knowledge of designing reliable systems comes into play, including which techniques will best mitigate the effects of things like environmental hazards as well as ensure systems meet specific application requirements.

Aitech, for example, has GPGPU-based boards and SFF systems that are qualified for, and survive in, a number of avionics, naval, ground, and mobile applications, thanks to decades of expertise that can be applied to system development. Space is the newest frontier the company is exploring for GPGPU technologies.

4. When processing requirements outpace system requirements, the alternatives require increased power consumption (i.e., buying more powerful hardware; over-clocking existing equipment).

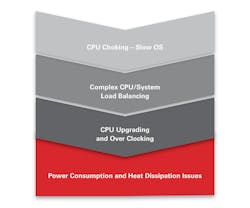

True. If the user is trying to avoid using GPGPU technology, they usually end up with CPU performance starvation. To try and solve this dilemma, additional CPU boards will typically be added, or existing boards will be over-clocked, leading to increased power consumption. In most cases, the result is a reduction in CPU frequency performance as well as the need for down-clocking to compensate for rising die temperatures.

5. Wouldn’t adding another processing engine just increase the complexity and integration issues within my system?

In the short term, maybe, since you need to account for the learning curve of working with any new, leading-edge technology. But in long run, no. CUDA has become a de facto computing language for image processing and algorithms. Once you build a CUDA algorithm, you can “reuse” it on any different platform supporting an NVIDIA GPGPU board. Porting it from one platform to another is easy, so the approach becomes less hardware-specific and therefore more “generic.”

6. As these GPGPU-based systems process extremely large amounts of data, it drives up power consumption.

Not really. GPGPU boards today are very power-efficient. Power consumption on some GPGPU boards today is the same as on CPU boards. However, GPGPU boards can process far more parallel data using thousands of CUDA cores. So, the power-to-performance ratio is what’s affected in a very positive way. More processing for the same, and sometimes slightly less, power.

7. Certainly tradeoffs still exist between performance and power consumption.

Yes, of course, and these tradeoffs always will exist. Higher performance and faster throughputs require more power consumption. That’s just a fact. But these are the same tradeoffs you find when using a CPU or any other processing unit.

As an example, take the “NVIDIA Optimus Technology” being used by Aitech. It’s a computer GPU switching technology in which the discrete GPU handles all of the rendering duties and the final image output to the display is still handled by the RISC processor with its integrated graphics processor (IGP). In effect, the RISC CPU’s IGP is only being used as a simple display controller, resulting in a seamless, real-time, flicker-free experience with no need to place the full burden of both image rendering and generation on the GPGPU or share CPU resources for image recognition across all of the RISC CPU. This load sharing or balancing is what makes these systems even more powerful.

When less-critical or less-demanding applications are run, the discrete GPU can be powered off. The Intel IGP handles both rendering and display calls to conserve power and provide the highest possible performance-to-power ratio.

8. Balancing the load on my CPU can be accomplished with a simple board upgrade and will be sufficient enough to manage the data processing required by my system.

Not true. The industry is definitely moving toward parallel processing, adopting GPU processing capabilities, and there’s good reason for this. Parallel processing an image is the optimal task for a GPU—this is what it was designed for. As the number of data inputs and camera resolutions continue to grow, the need for a parallel-processing architecture will become the norm, not a luxury. This is especially the case for mission- and safety-critical industries that need to capture, compare, analyze, and make decisions on several hundred images simultaneously (Fig. 2).

2. As data inputs increase, the CPU’s ability to handle the processing, load balancing, and clocking requirements will not keep pace with system requirements.

9. Moore’s Law also applies to GPGPUs.

Yes, but there’s a solution. NVIDIA is currently prototyping a multichip-module GPU (MCM-GPU) architecture that enables continued GPU performance scaling, despite the slowdown of transistor scaling and photo reticle limitations.

At GTC 2019, discussions from NVIDIA regarding the MCM-GPU chip highlighted many technologies that could be applied to higher-level computing systems. These include mesh networks, low-latency signaling, and a scalable deep-learning architecture, as well as a very efficient die-to-die on an organic substrate transmission technology.

10. Learning a whole new programming language (i.e., CUDA) requires too much of an investment of time and money.

Actually, no. Currently CUDA is the de facto parallel computing language, and many CUDA-based solutions are already deployed, so many algorithms have already been ported to CUDA. NVIDIA has a large online forum with many examples, web training classes, user communities, etc. In addition, software companies are willing to help you with your first steps into CUDA. And in many universities, CUDA language is now part of the programming language curriculum.

Learning any new computing technology can seem daunting. However, with the resources available and the exponential outlook of GPGPU technology, this is one programming language where making the investment will be extremely worthwhile.

11. There are no “industrial grade” GPGPUs for the embedded market, especially SFF, SWaP-optimized systems.

Not true. NVIDIA has an entire “Jetson” product line that’s oriented for the embedded market (Fig. 3). It currently includes the following System on Modules (SoMs), each of which are small form factor and size, weight, and power optimized:

- TX1

- TX2

- TX2i—special “Industrial” edition for very “harsh” environments

- Xavier

3. Designed for both industrial as well as military-grade applications, GPGPUs are redefining SWaP-optimization and the expected performance of SFF systems. (Courtesy of Aitech Group)

In fact, NVIDIA has announced a longer lifecycle for the TX2i module, meaning that component obsolescence is less of a risk to longer-term programs, like aerospace, defense, and space, as well as several rugged industrial applications. Aitech has deployed many military and industrial projects and customer programs based on the above embedded family, with new applications coming forward every day.

References:

About the Author

Daniel Mor

Product Line Manager of GPGPU and HPEC computers

Dan Mor has been working with Aitech since 2009, a leading supplier of rugged computer systems optimized for harsh environments. He currently holds a position as Product Line Manager of GPGPU and HPEC computers. Mor leads the GPGPU and HPEC roadmaps and plays a key role in architecture design and strategy planning.