Evaluate Coexistence of LTE and S-Band Radar


Bandwidth is precious and limited—so much so that the evolution of microwave/wireless applications will involve careful planning and accurate measurements to avoid the overlapping of frequencies. For example, potential issues may arise with frequencies occupied by existing S-band radar systems and allocations for Long-Term-Evolution (LTE) cellular/wireless commercial-communications systems.

Ideally, the different systems will remain in their proper frequency bands and will not interfere with each other. In practice, problems such as excessive spurious emission levels can degrade performance or cause system malfunctions. Proper measurement methodologies can help avoid such problems and ensure the coexistence of S-band radar systems and LTE networks.

Multiple applications within the same frequency band can face coexistence problems. As an example, consider the performance of LTE base stations and mobile devices and how they might have coexistence issues with recently allocated frequency bands defined by the Third Generation Partnership Project (3GPP) standard, 3GPP TS 36.101 (Release 13, December 2015). Proper measurements can determine whether interference exists, notably through in-field measurements at critical locations for both applications (such as airports).

The S-band frequency range has been defined by the IEEE as all frequencies from 2 to 4 GHz. Along with aviation and weather forecasting systems, a number of different maritime radar systems worldwide also operate at S-band frequencies. S-band radar systems include air-traffic-control (ATC) radars (typically between 2,700 and 3,100 MHz) and AN/SPY-1 Naval Air Surveillance Radar (ASR) systems operating between 3,100 and 3,500 MHz.

Coexistence is a concern for different S-band radar systems, especially in the U.S., because of LTE networks operating in Band 42, from 3,400 to 3,600 MHz; Band 43, from 3,600 to 3,800 MHz [with time-division-duplex (TDD) single-frequency operation for transmit and receive functions]; Band 7, from 2,620 to 2,690 MHz in the downlink and 2,500 to 2,570 MHz in the uplink; and Band 22, from 3,510 to 3,590 MHz in the downlink and 3,410 to 3,490 MHz in the uplink. Frequency-division-duplex (FDD) systems falling within this frequency range are also a concern for coexistence with radar systems at S-band frequencies.

The number of proposed LTE bands has increased from 11 to more than 50 operational bands in the last four years, and 150 MHz of spectrum in Bands 42 and 43 is anticipated for auction in the U.S. This spectrum, from 3,500 to 3,650 MHz, has been proposed for subdivision as 50 MHz for Tier 1 operators, 50 MHz for Public Safety use, and 50 MHz for Citizens Broadband Radio Service (CBRS). Any use of the spectrum by mobile radio networks must not disrupt primary users within the allocated spectrum, but national and international authorities have not yet agreed on guidelines for assessing potential interference issues.

At the recent World Radiocommunication Conference 2015 (WRC-15), held in Geneva in November 2015, Recommendation 75 (REV.WRC-15), “Study of the boundary between the out-of-band and spurious domains of primary radars using magnetrons,” proposed assessing the improvement of measurement methodologies and performance levels of radars (ref. 5). Also, Recommendation 207 (REV.WRC-15), “Future International Mobile Telecommunications (IMT) Systems,” proposed a continuous study of the necessary technical, operational, and spectrum-related issues to meet objectives for developing future mobile telecommunication systems, taking into consideration requirements for other services (ref. 2).

The FCC requires use of cognitive radio technologies and consultation of the national spectrum database, the Spectrum Access System (SAS), with this spectrum. Cognitive radio technologies are certainly not new, and detect and avoid (DAA), transmit power control (TPC), and dynamic frequency selection (DFS) techniques have been employed for many unlicensed radio technologies—including the IEEE 802.11 wireless-local-area-network (WLAN) standards—to avoid interference with weather radar and military applications in the 5-GHz band. However, licensed technologies, such as LTE, have not previously had to assess performance for coexistence with scanning radars.

Most of these types of radars apply pulse and pulse compression waveforms. After transmitting a pulse, the radar switches to receive mode to obtain radar echo pulses from illuminated targets. The high sensitivity needed to acquire low-level returning pulse echoes also makes radar receivers susceptible to interfering signals. LTE networks using nearby frequencies can cause this interference and may significantly degrade radar performance. Developing the capability for an LTE network or mobile device receiver to detect and avoid a scanning radar may also represent a significant challenge.

Spectrum Sharing

An S-band radar system can disturb an LTE network and degrade its performance, which shows up as an increase in the LTE network’s block error rate (BLER). This loss of LTE network performance and poor spectral efficiency may not be a major drawback to a mobile communications network operator, but the reduction of power can result in an increase in operating costs to maintain the performance expected by mobile customers. The 3GPP specifications may define solutions for the problem (such as the use of dynamic frequency selection or transmit power control) that do not disturb other signals, but the challenge in developing a suitable receiver capable of detecting a scanning S-band radar should not be underestimated.

As an example, a radar scanning at a rate of 12 rpm with 2-deg. antenna beamwidth will illuminate a stationary target with the main beam for about 27 ms per rotation. For a typical pulse width of 6 μs and pulse repetition interval (PRI) of 1 ms, the radar receiver will receive reflected signals from that target for 162 μs during this time (27 pulses of 6 μs each), or about 2 ms of target illumination every minute. If the bandwidth and sensitivity of the receiver are considered, the system is also scanning over a frequency range of several megahertz.
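The arithmetic in this example can be reproduced with a short sketch. It assumes a stationary point target, a uniformly rotating antenna, and whole pulses only; the function names are illustrative and not taken from any tool mentioned in this article.

```python
def dwell_time_s(rpm, beamwidth_deg):
    """Time the main beam spends on a stationary point target per rotation."""
    deg_per_s = rpm * 360.0 / 60.0
    return beamwidth_deg / deg_per_s


def pulses_on_target(dwell_s, pri_s):
    """Number of complete pulses that fit into one dwell."""
    return int(dwell_s / pri_s)


RPM = 12
dwell = dwell_time_s(RPM, 2.0)            # roughly 27.8 ms per rotation
n_pulses = pulses_on_target(dwell, 1e-3)  # 27 pulses at a 1-ms PRI
echo_per_scan_s = n_pulses * 6e-6         # 27 x 6 us = 162 us
echo_per_minute_s = echo_per_scan_s * RPM # about 1.9 ms per minute

print(f"dwell: {dwell * 1e3:.1f} ms, pulses: {n_pulses}")
print(f"echo time per scan: {echo_per_scan_s * 1e6:.0f} us")
print(f"echo time per minute: {echo_per_minute_s * 1e3:.2f} ms")
```

The short total illumination time is what makes a scanning radar hard for an LTE receiver to detect reliably.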

For an S-band radar system, the RF emission bandwidth can range from several kilohertz to dozens of megahertz, with peak transmission power typically greater than +90 dBm (i.e., 1 MW or more). While a disturbance may occur for any on-channel event, moving the active channel transmission to an adjacent or alternate channel several tens of megahertz away may mitigate the impact to the network.
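Because the power levels in this article span many orders of magnitude, it helps to convert between dBm and watts explicitly. The sketch below uses only the standard definition of dBm (decibels relative to 1 mW); the helper names are illustrative.

```python
import math


def dbm_to_watts(p_dbm):
    """Convert a power level in dBm to watts (0 dBm = 1 mW)."""
    return 10.0 ** (p_dbm / 10.0) / 1000.0


def watts_to_dbm(p_watts):
    """Convert a power in watts to dBm."""
    return 10.0 * math.log10(p_watts * 1000.0)


radar_peak_w = dbm_to_watts(90.0)       # 1e6 W, i.e. 1 MW
radar_floor_w = dbm_to_watts(-120.0)    # 1e-15 W, i.e. 1 fW

print(f"+90 dBm  = {radar_peak_w:.3g} W")
print(f"-120 dBm = {radar_floor_w:.3g} W")
```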

Mobile network devices employ standard RF channel filtering in line with 3GPP standards to minimize interference. However, measurement results show that LTE infrastructure equipment, such as base stations, can be impacted by radar signals and should be tested for compatible operation.

At the same time, LTE signals can also cause harm to S-band radar systems. A radar receiver may be designed to receive an echo return as low as -120 dBm. Per the 3GPP TS 36.101 and TS 36.104 standards, LTE base stations are allowed to transmit a maximum of +46 dBm with additional antenna gain of approximately 15 dBi. Mobile devices are allowed to operate with a maximum transmission power of +23 dBm. An exception allows mobile devices to operate at a transmit power of +31 dBm in Band 14 (the Public Safety band in the 700-MHz spectrum in the U.S.).
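To get a feel for the gap between these numbers, the following sketch computes the base station's effective isotropic radiated power (EIRP) and the isolation needed to bring it down to a -120 dBm radar sensitivity. It is a rough illustration only: it assumes an on-channel signal, free-space propagation, a hypothetical 3,500-MHz carrier, and it ignores radar antenna gain, filtering, and terrain, all of which matter in a real coexistence study.

```python
import math


def fspl_db(distance_km, freq_mhz):
    """Free-space path loss in dB for a distance in km and frequency in MHz."""
    return 20.0 * math.log10(distance_km) + 20.0 * math.log10(freq_mhz) + 32.44


def distance_for_loss_km(loss_db, freq_mhz):
    """Invert the free-space formula: distance at which the loss is reached."""
    return 10.0 ** ((loss_db - 32.44 - 20.0 * math.log10(freq_mhz)) / 20.0)


TX_POWER_DBM = 46.0        # maximum base station power cited in the text
ANTENNA_GAIN_DBI = 15.0    # approximate antenna gain cited in the text
RADAR_FLOOR_DBM = -120.0   # radar echo sensitivity cited in the text
FREQ_MHZ = 3500.0          # assumption: illustrative carrier frequency

eirp_dbm = TX_POWER_DBM + ANTENNA_GAIN_DBI       # 61 dBm
isolation_db = eirp_dbm - RADAR_FLOOR_DBM        # 181 dB
free_space_km = distance_for_loss_km(isolation_db, FREQ_MHZ)

print(f"EIRP: {eirp_dbm:.0f} dBm, required isolation: {isolation_db:.0f} dB")
print(f"free-space distance for that loss: {free_space_km:.0f} km")
```

The free-space distance comes out at thousands of kilometers, which simply shows that distance alone cannot supply the isolation; frequency separation, filtering, and power control have to do most of the work.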

While power limits for Bands 42 and 43 for the 50-MHz Public Safety allocation have yet to be determined, there is a good chance that these power levels will be substantially higher than +23 dBm. In collocated bands, LTE system levels above -120 dBm can impact the probability of detection and the gain control of an S-band radar receiver. The receiver may be driven into compression, resulting in nonlinear responses or a rise in the constant false alarm rate (CFAR) threshold. Radar targets may be lost or left undetected.
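The rise in the CFAR threshold can be illustrated with a cell-averaging CFAR detector. This is a sketch under stated assumptions: the article does not say which CFAR variant a given radar uses, and the cell count, false alarm probability, and power values below are hypothetical. The scaling factor is the textbook one for a square-law detector in exponentially distributed noise.

```python
def ca_cfar_threshold(reference_cells, pfa):
    """Cell-averaging CFAR threshold from reference-cell powers (linear units)."""
    n = len(reference_cells)
    alpha = n * (pfa ** (-1.0 / n) - 1.0)   # scaling for the desired Pfa
    return alpha * sum(reference_cells) / n


N_CELLS = 16          # assumption: number of reference cells
PFA = 1e-4            # assumption: design false alarm probability
TARGET_POWER = 20.0   # assumption: echo power relative to the noise floor

# Noise only: reference cells sit at the normalized noise floor.
clean_cells = [1.0] * N_CELLS
# With LTE interference raising the floor by about 6 dB (factor of 4).
interfered_cells = [4.0] * N_CELLS

thr_clean = ca_cfar_threshold(clean_cells, PFA)
thr_interfered = ca_cfar_threshold(interfered_cells, PFA)

detected_clean = TARGET_POWER > thr_clean
detected_interfered = TARGET_POWER > thr_interfered

print(f"threshold without interference: {thr_clean:.1f}, detected: {detected_clean}")
print(f"threshold with interference:    {thr_interfered:.1f}, detected: {detected_interfered}")
```

The same echo that clears the threshold in noise alone falls below it once the interference lifts the reference-cell average, which is how a target can be lost without the false alarm rate changing.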

Fortunately, test solutions have been developed based on recorded and simulated signals representing the LTE and radar signals. Using realistic test signals, the functionality of both systems can be verified and mitigation techniques developed to minimize the effects of interference.

The 3GPP has defined multiple test cases for three LTE test areas (protocol/signaling, mobility, and radio resource management, or RRM) as well as for RF conformance. Such testing ensures minimum compliance with the current 3GPP standard. However, the majority of receiver and performance tests for LTE assume the presence of only additional LTE or 3G signals, such as wideband code-division-multiple-access (WCDMA) signals as part of the Universal Mobile Telecommunications System (UMTS). The 3GPP has not defined tests for the presence of radar signals at frequencies adjacent to the signal received from an LTE base station.

To test LTE system operation, it would be beneficial to record an S-band radar signal and play it back on an adjacent frequency while performing a throughput test or receive sensitivity test. A universal network scanner can be used to record ATC radar signals, with in-phase/quadrature (I/Q) data stored in memory and replayed for testing on a vector signal generator (VSG). As shown in refs. 3, 4, and 5, coexistence issues have occurred when an LTE-capable terminal with an active data connection comes close to an S-band radar signal.

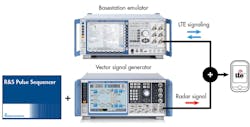

One alternative to recording and playing back radar signals for testing LTE coexistence capability is the computer-based synthesis of signals representing a challenging RF environment. This cost-effective approach (Fig. 1) allows defining a radar signal, antenna pattern, and radar position in software without on-site testing (ref. 5). Essentially, test signal conditions are generated in software and then replayed on a VSG.

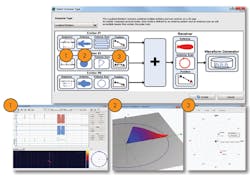

As an example, pulse sequencer software (ref. 5) was used to generate a sequence of consecutive pulses without modulation, followed by a pulse with linear frequency modulation (LFM) (Fig. 2). The measurement approach combines the test signal with a cosecant-squared antenna pattern (1 in Fig. 2) and rotational motion (2 in Fig. 2) to form the pulse signal for a directional position north and east of the receiver under test (3 in Fig. 2).

This test software is not limited to radar signals; it can also define unlimited types of arbitrary signal sequences and antenna patterns. Signals created in software are then calculated as they would appear at a receiver under test and replayed with a VSG. In this way, a receiver’s baseline performance can be compared to its performance in the presence of a disturbing signal. The reproducible test setup can define an operating environment according to expected performance levels, without expensive, time-consuming on-site trials.

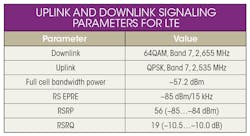

The measurement approach was used to create a representative radar signal, a pulsed chirp signal with 20-MHz LFM, 72-μs on time, and 1-ms PRI. A representative, commercially available mobile phone was connected to a model CMW500 base station emulator in a shielded box with LTE signaling parameters defined in the table and in ref. 4. The base station emulator was set to its “Follow Wideband” signaling mode with a 10-MHz signal bandwidth. In this mode, the LTE mobile phone can track downlink channel quality using embedded reference signals.
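A pulsed chirp with these parameters can be sketched as complex baseband I/Q samples. This is not the pulse sequencer software's method or file format; it is a minimal stdlib illustration, and the 25-MHz sample rate and the function name are assumptions chosen only so that the 20-MHz sweep fits within the sampled bandwidth.

```python
import cmath
import math


def lfm_pulse_train(bandwidth_hz, on_time_s, pri_s, fs_hz, n_pulses=1):
    """Complex baseband LFM pulses, each followed by silence up to the PRI."""
    n_on = round(on_time_s * fs_hz)
    n_pri = round(pri_s * fs_hz)
    chirp_rate = bandwidth_hz / on_time_s          # sweep rate in Hz per second
    pulse = []
    for i in range(n_on):
        t = i / fs_hz - on_time_s / 2.0            # centered: sweep from -B/2 to +B/2
        pulse.append(cmath.exp(1j * math.pi * chirp_rate * t * t))
    one_pri = pulse + [0j] * (n_pri - n_on)        # radar is silent between pulses
    return one_pri * n_pulses


FS_HZ = 25e6   # assumption: complex sample rate, above the 20-MHz sweep width
iq = lfm_pulse_train(20e6, 72e-6, 1e-3, FS_HZ)

n_active = sum(1 for s in iq if abs(s) > 0.0)
print(f"samples per PRI: {len(iq)}, active samples: {n_active}")
print(f"duty cycle: {100.0 * n_active / len(iq):.1f}%")
```

A sample list like this would still need to be scaled, converted to the generator's waveform format, and shifted to the wanted RF offset before playback on a VSG.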

The measured signal receive quality is translated to a Channel Quality Indicator (CQI) value that is reported back to the LTE network. The scheduler in an LTE base station can use the CQI information to set the resource allocation, modulation, and coding scheme based on the channel conditions seen by the mobile phone. With any interference present, the mobile phone would measure lower received signal quality, resulting in a lower modulation and coding scheme adopted by the base station.

To evaluate the downlink throughput of the mobile phone, the pulsed radar test signal was varied in frequency and power; the downlink throughput was measured after each change. An average throughput value of a 100,000-subframe measurement was plotted versus frequency and disturbing signal power for analysis (Fig. 3). The throughput decreases almost steadily as a function of the disturbing signal’s frequency and power. The impact of the disturbing signal is apparent even when it is offset 10 MHz from the downlink center frequency. The BLER is plotted on the right side of Fig. 3, with 100% BLER representing no useful signal and lower BLER showing more useful signals present.
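The two quantities plotted in Fig. 3 can be derived from per-subframe acknowledgments. The sketch below assumes each 1-ms LTE subframe carries one transport block that is either acknowledged or not; the record layout and the transport block size are hypothetical, and a base station emulator computes these figures internally.

```python
SUBFRAME_S = 1e-3   # LTE subframe duration


def summarize(subframes):
    """Return (BLER, average throughput in bit/s) for (acked, tbs_bits) records."""
    total = len(subframes)
    nacks = sum(1 for acked, _ in subframes if not acked)
    delivered_bits = sum(bits for acked, bits in subframes if acked)
    bler = nacks / total
    throughput_bps = delivered_bits / (total * SUBFRAME_S)
    return bler, throughput_bps


# Hypothetical run: 100 subframes of 10,000 bits each, every tenth one lost.
records = [(i % 10 != 0, 10_000) for i in range(100)]
bler, throughput_bps = summarize(records)

print(f"BLER: {100.0 * bler:.0f}%")
print(f"average throughput: {throughput_bps / 1e6:.1f} Mb/s")
```

Averaging over a long run, such as the 100,000 subframes used here, smooths out the burstiness caused by a pulsed disturber that is present for only a fraction of the time.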

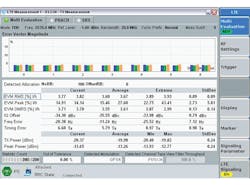

The test setup was changed to evaluate uplink performance for the mobile phone, using the base station emulator to evaluate error-vector-magnitude (EVM) performance. The LTE measurement mode was FDD with an uplink frequency of 2,535 MHz and bandwidth of 20 MHz. Figure 4 shows peak and average EVM on the base station emulator display screen. Under normal conditions, the average EVM is 3.67%. By varying the power level of an interfering pulse signal, the average RMS EVM for the uplink channel can be evaluated.
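For readers unfamiliar with the metric, RMS EVM compares measured constellation points with their ideal positions. The sketch below is a simplified form normalized to the mean reference power; the 3GPP definition adds equalization and specific averaging windows, and the sample values here are made up for illustration.

```python
import math


def rms_evm_percent(measured, reference):
    """RMS EVM in percent, normalized to the mean power of the reference symbols."""
    error_power = sum(abs(m - r) ** 2 for m, r in zip(measured, reference))
    reference_power = sum(abs(r) ** 2 for r in reference)
    return 100.0 * math.sqrt(error_power / reference_power)


# Hypothetical symbols: ideal points at 1+0j, each measured with a 0.03 error.
reference = [1 + 0j] * 4
measured = [1.03 + 0j, 0.97 + 0j, 1 + 0.03j, 1 - 0.03j]

evm = rms_evm_percent(measured, reference)
print(f"RMS EVM: {evm:.2f}%")
```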

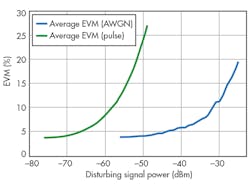

Figure 5 compares the effects of a pulse signal and 20-MHz-wide additive white Gaussian noise (AWGN) on the uplink channel. Any disturbing signal greater than -48 dBm results in the uplink channel being “out of sync.” Disturbing signals below this level increase the uplink EVM. The AWGN requires less power than the pulse signal to disrupt uplink performance, since it is present continuously, whereas the pulse has a duty cycle of only 0.5%.
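The role of the duty cycle can be made concrete by relating peak and average power. This sketch assumes ideal rectangular pulses and says nothing about how a particular receiver reacts to pulsed versus continuous interference; the -48 dBm level is reused from the text purely as an example input.

```python
import math


def average_power_dbm(peak_dbm, duty_cycle):
    """Average power of an ideal rectangular pulse train with the given duty cycle."""
    return peak_dbm + 10.0 * math.log10(duty_cycle)


DUTY_CYCLE = 0.005   # 0.5% duty cycle, as stated in the text

offset_db = 10.0 * math.log10(DUTY_CYCLE)            # about -23 dB
pulse_avg_dbm = average_power_dbm(-48.0, DUTY_CYCLE) # about -71 dBm

print(f"average-to-peak offset: {offset_db:.1f} dB")
print(f"-48 dBm peak pulse averages to {pulse_avg_dbm:.1f} dBm")
```

At equal peak level, the pulsed signal delivers roughly 23 dB less average power than a continuous one, which is consistent with the noise disrupting the uplink at a lower level.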

This measurement approach provides a practical means for evaluating the coexistence of S-band radar systems with LTE mobile terminals: The test system can evaluate the effects of LTE signals on the radars as well as the effects of pulsed radar signals on LTE network performance (ref. 3). The test results achieved in the laboratory correlate well with in-field test data, making it possible to use this test methodology to help test and plan networks that can coexist with incumbent radar infrastructure systems.

Dr. Steffen Heuel, Technology Manager

Test & Measurement Division

Rohde & Schwarz, Muehldorfstrasse 15, 81671 Munich, Germany

References

1. 3.5 GHz Spectrum Access Workshop.

2. Provisional Final Acts World Radio Communications Conference (WRC-15), Geneva, Switzerland, November 2-27, 2015.

3. realWireless, Ofcom, Final Report – Airport Deployment Study Ref MC/045, Version 1.5, July 19, 2011.

4. Coexistence Test of LTE and Radar Systems, Application Note, March 2014.

5. S. Heuel and A. Roessler, “Co-Existence Tests for S-Band Radar and LTE Networks,” Microwave Journal, November 2015.
